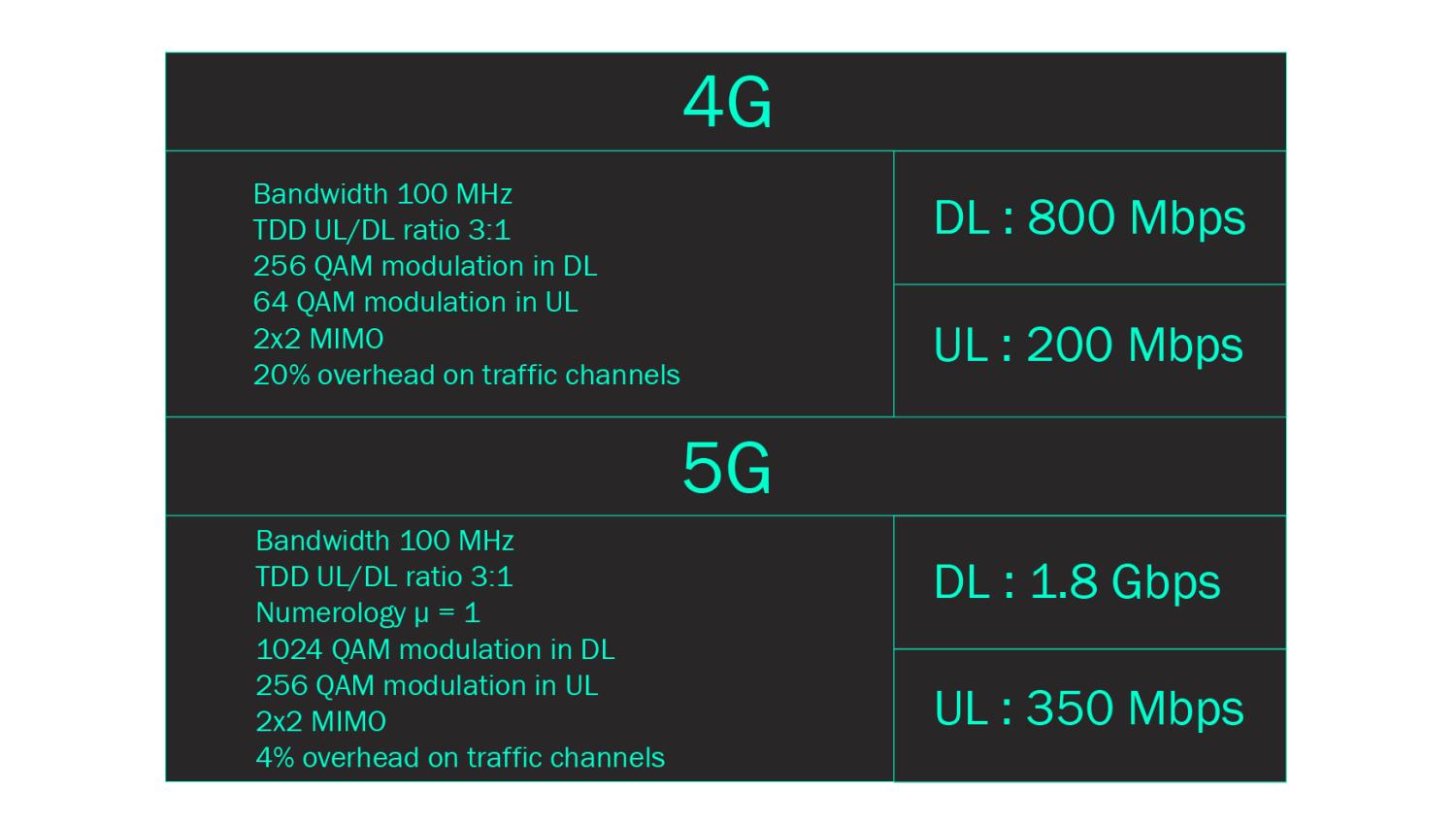

Comparative spectral efficiency of 4G and 5G

5G incorporates developments that reduce the share of radio resources not directly allocated to transporting user data.

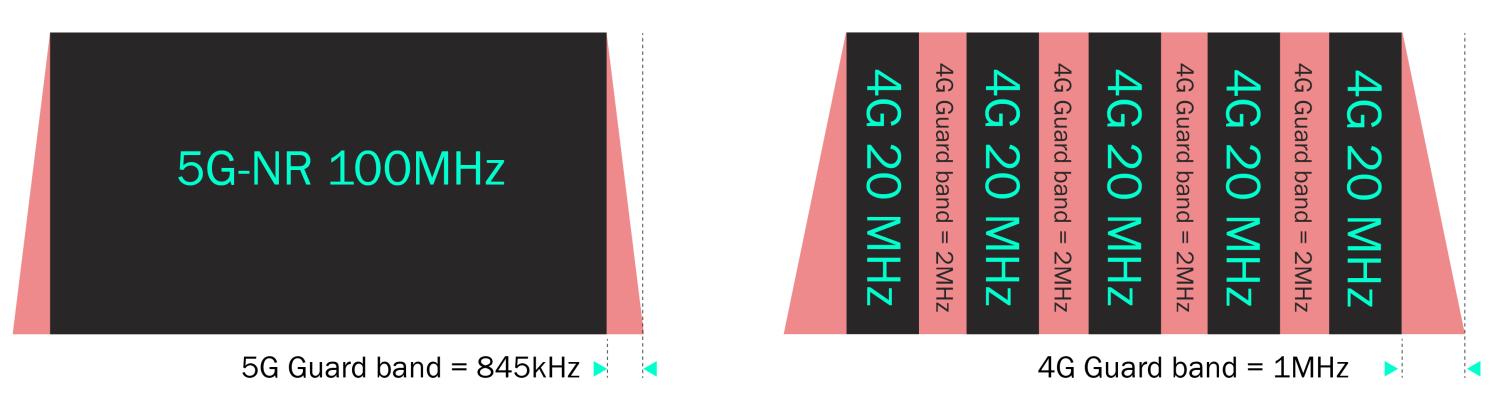

Reduced radio guard bands: guard bands protect networks operating on adjacent frequencies against interference, at the cost of reducing the radio resources available to the end user.

In 4G, the guard bands represent 10% of the maximum 20 MHz channel bandwidth, i.e. 2 MHz. Moreover, obtaining an equivalent 100 MHz band requires aggregating five 20 MHz carriers, each modulated independently to remain compatible with older terminals, so their 2 MHz guard bands are aggregated as well. That adds up to 5 × 2 MHz = 10 MHz of unusable spectrum!
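
The 4G arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, assuming (as the text does) that every aggregated carrier is a full 20 MHz LTE carrier losing 10% of its width to guard bands; the function name `lte_guard_band_loss_mhz` is hypothetical.

```python
import math

LTE_CARRIER_MHZ = 20.0     # maximum bandwidth of a single LTE carrier
LTE_GUARD_FRACTION = 0.10  # guard bands take ~10% of each carrier (figure from the text)


def lte_guard_band_loss_mhz(total_mhz: float) -> float:
    """MHz lost to guard bands when total_mhz is built from aggregated LTE carriers."""
    # Each aggregated carrier is modulated independently and keeps its own guard bands.
    n_carriers = math.ceil(total_mhz / LTE_CARRIER_MHZ)
    return n_carriers * LTE_CARRIER_MHZ * LTE_GUARD_FRACTION


print(f"{lte_guard_band_loss_mhz(100):.1f} MHz unusable")  # 5 carriers x 2 MHz each
```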

In 5G, guard bands are much narrower than in previous generations thanks to advances in band filtering. Their width depends on the OFDM subcarrier spacing and the total channel bandwidth. For a 100 MHz channel with 30 kHz subcarrier spacing, each of the two guard bands is 845 kHz wide, for a total of about 1.7 MHz: far less than the 10 MHz of unusable spectrum in 4G!
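
The 845 kHz figure can be reproduced with the minimum guard band equation given in a note to the guard band table of 3GPP TS 38.101-1 (FR1). The sketch below assumes that equation and the value N_RB = 273 resource blocks for a 100 MHz channel at 30 kHz; both come from that specification, not from the text above, and the helper name `nr_min_guard_band_khz` is hypothetical.

```python
SUBCARRIERS_PER_RB = 12  # an NR resource block spans 12 subcarriers


def nr_min_guard_band_khz(channel_bw_mhz: float, n_rb: int, scs_khz: float) -> float:
    """Minimum guard band on each side of an NR carrier, in kHz.

    Assumed equation (TS 38.101-1 guard band table note):
    (BW * 1000 - N_RB * SCS * 12) / 2 - SCS / 2
    """
    occupied_khz = n_rb * scs_khz * SUBCARRIERS_PER_RB  # spectrum actually carrying RBs
    return (channel_bw_mhz * 1000 - occupied_khz) / 2 - scs_khz / 2


# Assumption: 273 RBs fit in a 100 MHz channel at 30 kHz subcarrier spacing.
per_side = nr_min_guard_band_khz(100, 273, 30)
print(f"{per_side:.0f} kHz per side, {2 * per_side / 1000:.2f} MHz total")
```

The same function accepts other bandwidth and spacing combinations, provided the matching N_RB value is looked up in the specification.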

5G and 4G guard bands comparison

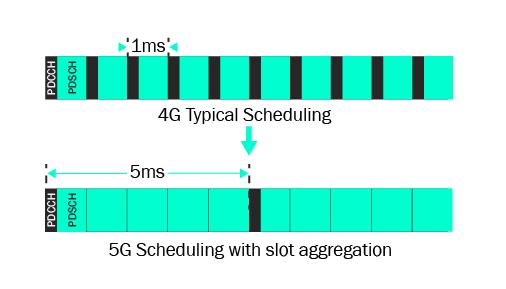

Reduced signaling overhead: in a cellular network, the network allocates radio resources to the terminals it covers, based on radio metrics describing the propagation channel and on the quality of service targeted for each user. This allocation requires signaling messages to be exchanged between the network and the terminals, and these messages inevitably consume resources that cannot be assigned to user traffic.

In 4G, these signaling messages occupy up to 3 of the 14 OFDM symbols in every 1 ms sub-frame. Taking the full 3 symbols, the resource allocation mechanism consumes 5 × 3 = 15 OFDM symbols every 5 ms, out of the 5 × 14 = 70 OFDM symbols transmitted in that time. In 5G, an aggregation mechanism allows these signaling messages to be sent less often. With 30 kHz subcarrier spacing as before, a 14-symbol slot lasts 0.5 ms, so 5 ms contains 10 slots, i.e. 10 × 14 = 140 OFDM symbols. With an aggregation of 5 slots, signaling is sent only twice in that window and consumes 2 × 3 = 6 OFDM symbols. In the end, signaling overhead represents around 20% of total capacity in 4G (15/70 ≈ 21%), compared with about 4% in 5G (6/140 ≈ 4.3%).
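
The overhead comparison can be checked with exact fractions. The sketch assumes, as the text does, 3 control symbols per scheduling occasion, 14 OFDM symbols per 4G sub-frame or 5G slot, and one 5G control occasion per 5 aggregated slots; `control_overhead` and its parameter names are hypothetical.

```python
from fractions import Fraction

SYMBOLS_PER_UNIT = 14  # OFDM symbols per LTE sub-frame / NR slot (normal cyclic prefix)


def control_overhead(scs_khz: int, window_ms: int, ctrl_symbols: int,
                     units_per_grant: int) -> Fraction:
    """Fraction of OFDM symbols spent on resource allocation signaling.

    scs_khz: subcarrier spacing; a 14-symbol unit lasts 1 ms * 15 / scs_khz.
    ctrl_symbols: control symbols sent at each scheduling occasion.
    units_per_grant: how many sub-frames/slots one control occasion covers.
    """
    units = window_ms * scs_khz // 15       # sub-frames (4G) or slots (5G) in the window
    occasions = units // units_per_grant    # how many times control is sent
    return Fraction(occasions * ctrl_symbols, units * SYMBOLS_PER_UNIT)


lte = control_overhead(scs_khz=15, window_ms=5, ctrl_symbols=3, units_per_grant=1)
nr = control_overhead(scs_khz=30, window_ms=5, ctrl_symbols=3, units_per_grant=5)
print(f"4G: {lte} = {float(lte):.1%}")  # 15/70 reduces to 3/14
print(f"5G: {nr} = {float(nr):.1%}")    # 6/140 reduces to 3/70
```

Using `Fraction` keeps the ratios exact, so the printed values can be compared directly with the 15/70 and 6/140 figures in the text.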

Radio resource allocation signaling overhead

In conclusion, the spectral efficiency gain in 5G allows significantly higher data rates than in 4G. The table opposite gives the theoretical physical-layer data rates that result from the differences described above.
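
As a rough cross-check, and not a reconstruction of that table, the two effects can be combined into the share of radio resources left for user data. This sketch assumes the guard band loss (in frequency) and the signaling loss (in time) are independent, so they multiply; it deliberately ignores modulation, MIMO, reference signals and any other factor the table may account for. `usable_share` is a hypothetical helper.

```python
def usable_share(guard_mhz: float, channel_mhz: float, control_fraction: float) -> float:
    """Share of resources left for user data after guard bands and control signaling.

    Assumes the two losses are independent: one removes bandwidth,
    the other removes OFDM symbols in time, so their effects multiply.
    """
    return (1 - guard_mhz / channel_mhz) * (1 - control_fraction)


# Figures from the text: 10 MHz vs ~1.69 MHz (2 x 845 kHz) of guard bands in 100 MHz,
# and 15/70 vs 6/140 of OFDM symbols spent on resource allocation signaling.
lte = usable_share(10.0, 100.0, 15 / 70)
nr = usable_share(1.69, 100.0, 6 / 140)
print(f"4G: {lte:.1%}  5G: {nr:.1%}  ratio: {nr / lte:.2f}")
```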